This article was co-authored by David Barron and Sean McKenna

The siren song of optimization

Every era of technological progress has its tempting siren song — an alluring tune promising advancement if we follow its call. Today, that song is optimization driven by Artificial Intelligence (AI). AI, with its power to automate, predict, and accelerate processes, invites organizations to eliminate friction, reduce costs, and achieve new levels of efficiency.

But like the sailors of myth, those who chase too closely after the promise of optimization without consideration of where they’re sailing often end up dashed against the rocks. When optimization becomes an end in itself, rather than a means, it can erode trust, alienate customers, and weaken the very value that AI was supposed to improve.

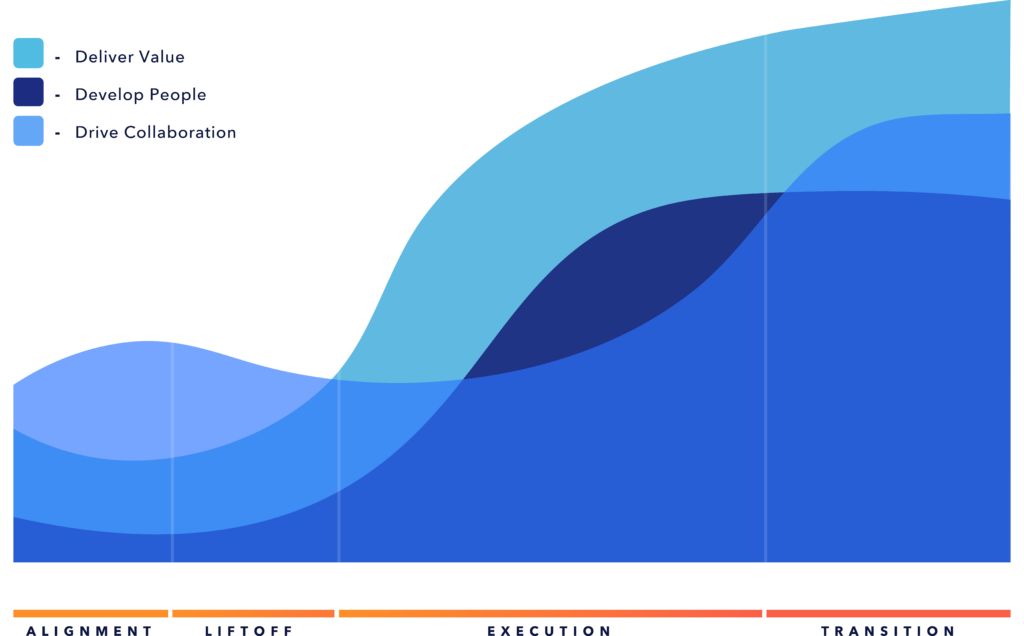

Gartner speaks to the application of Generative AI (genAI) through the lens of a simple model: Defend, Extend, Upend (Maybe). The “Defend” position seeks to augment productivity with AI. “Extend” embeds genAI into “specific processes” seeking efficiency improvements. “Upend” leverages genAI to transform industries. As organizations mature their AI journey, they’re building and deploying more solutions in the “Extend” realm. However, our research across various industries reveals a common theme: pursuing AI-driven efficiency by replacing human effort with AI solutions often neglects the human system within which those solutions operate. When these “Extend” solutions fall short, it’s not due to a technological failure but a design failure.

We have chosen to focus on the Defend and Extend parts of Gartner’s model because we believe these AI integration points are where hyper-optimization and automation are most likely to happen. When in Upend mode, businesses undergo major transformations and are more likely to stay focused on customers and end-users to successfully navigate a transformational shift.

Case studies: When AI-driven optimization goes wrong

The rental car damage scanner: Automation without judgment

When a major car rental company deployed AI-powered damage scanners across its rental locations, the goals were clear: reduce disputes, speed up inspections, and automate the subjective process of vehicle assessment. Goals achieved, along with an unexpected bonus: public outrage. The AI’s pattern-recognition system was so effective that it missed nothing, and some customers received automated claims for hundreds of dollars (CBS News). One customer was billed $440 for a small wheel scuff, including $190 in administrative and processing fees (Car and Driver).

Devoid of human oversight, the system treated customers as data points rather than as people. The efficiency gains were real, but so was the reputational damage. The technology succeeded in identifying anomalies; however, it failed to understand the context. Even though a reported 97% of customers were not billed for damage, the 3% who were generated enough negative feedback to prompt multiple articles spotlighting an experience only a few had, with some customers publicly stating they would have chosen another brand had they known the AI system was in use.

Fast food AI drive-through: When voice recognition meets real life

A major fast food chain partnered with IBM from 2021 to 2024 to test AI voice ordering at its drive-throughs. The goals were to speed up the drive-through process, increase order accuracy, generate more revenue, and reduce costs (Museum of Failure). Instead, the pilot became a viral example of over-automation gone wrong. The system often misheard orders, adding bacon to ice cream or ringing up 260 chicken nuggets. Customers laughed, recorded videos, and shared their frustration on social media, and the project was abandoned after three years.

The goal was to optimize transaction time. The outcome was a global case study in what humans have always known: if you cannot understand your customers, you cannot serve them, whether you are an AI or a human.

Global shipping and the paradox of dehumanized service

In a LinkedIn post, a user shared a real-world example of AI-driven over-optimization. A delivery truck driver accidentally backed over and destroyed a brick mailbox. Realizing what had happened, the driver persistently tracked down the homeowner to take responsibility for the mistake. However, to resolve it, the homeowner had to report the damage through the shipper’s automated customer service system (CIO), which couldn’t process property damage reports. It offered only shipment-claim options, and reaching a human agent required an 85-minute wait. The driver’s integrity was overshadowed by a system designed for volume, not understanding.

This contrast reveals a truth: humans within a system often outperform the systems designed to replace them. Why? Even with remarkable advances in large language models (LLMs) and genAI that enable human-like interactions, the technology still requires implementers to put controls in place that keep the AI from going astray. In the end, the automated customer service system (the siren) was simply incapable of handling damage reports (the wind), led a customer down a lengthy path to resolution (the rocks), and invited public scrutiny (the crash).

A glimpse at success: Netflix and healthcare

Not all optimization is blind. Netflix’s recommendation engine accounts for more than 80% of content streamed on its platform, yet its effectiveness relies on human curation. Editorial analysts tag and describe content to enhance machine learning, ensuring personalization feels natural rather than robotic (GrowthSetting).

In healthcare, “human-in-the-loop” AI has been reported to reduce diagnostic errors by nearly 30%, but, importantly, doctors still make the final decisions. Transparency, trust, and oversight ensure the system functions effectively (Complere Infosystem).

The contrast is stark: when AI augments human judgment, outcomes improve; when it replaces it, failures compound.

Friction isn’t the enemy

In the age of AI, friction is often viewed as a sign of failure — a symptom of inefficiency or poor design. But some friction serves a purpose. It helps relationships form and deepen, trust develop, and empathy grow.

Think about the rental car customer charged for minimal damage that did little to affect the vehicle’s rentability or the value of the company’s asset. The moment of friction, when a human would pause, inspect the car, and listen to the customer’s story, was taken away. The efficiency gain came at the expense of understanding. In removing that friction, the rental car company also removed the flexibility and adaptability that gave customers a voice.

Here’s a paradox: the few interactions that fail can outweigh the many that succeed. A single viral post, a frustrated customer with a large audience, or a journalist uncovering systematic unfairness can wipe out millions in AI-driven savings. When organizations dismiss edge cases as statistical noise, they overlook the fact that human systems are inherently nonlinear — emotionally, reputationally, and socially.

As we explore in the article “AI and the Human Experience: For you or to you?”, friction can indicate where systems should connect more deeply with people, not less.

Automation ≠ absolute improvement

Many optimization efforts don’t actually eliminate work — they shift it.

AI systems often transfer cognitive or emotional labor from employees to customers. Think about chatbots that reduce the customer service workload for companies by passing it on to users, or HR systems that require candidates to complete long self-assessments that recruiters used to handle. These solutions do drive efficiencies for the organizations that created them. However, we find it all too easy to forget how customers experience those efficiency gains. When organizations lose sight of that perspective, efficiency gains can turn into a loss of trust and loyalty.

As one study from ISG found, AI-driven customer service fails four times more often than other AI applications, and 75% of customers still prefer speaking with humans (ISG One). Customers aren’t rejecting AI — they’re rejecting poor design that leaves them with unmet needs.

In a world obsessed with doing more faster, improvement requires reframing what “better” means. Sometimes, the right optimization is restraint.

Guidance: Designing AI that balances efficiency and empathy

Our research and experience across industries indicate that successful AI initiatives have one key trait: they are human-centered systems, not just technical implementations. Here’s a practical framework for executives to assess where optimization might be beneficial — or detrimental.

Use systems thinking to map impacts

Every optimization impacts a larger system. Before automating, map not just the workflow but also the relationships, incentives, and trust structures surrounding it. Ask: If this system runs perfectly, who might it inadvertently harm? Systems thinking shifts AI from a fast tool to a strategic instrument — a theme we’ll explore more deeply later in this series.

Ask: What work is removed, moved, or added?

Automation rarely eliminates work; it redistributes it. Track where human effort shifts when AI is introduced. Does it move downstream to the customer, or upstream to a frustrated employee who now must explain machine decisions? This analysis shows whether your AI adds real value or creates hidden burdens.

Evaluate optimization in terms of outcomes, not just cost or speed

Leaders should prioritize clarity in the customer experience, ethical integrity, and long-term trust, not just cost and speed metrics. Ask: Are we defending what exists, extending it to the point of over-optimization, or upending it and transforming toward something better? Upend, and dare to reimagine the system itself.

Revisit Christensen’s Jobs to Be Done

As Clayton Christensen’s Jobs to Be Done theory reminds us, people “hire” products and services to do specific jobs. AI systems often fail because they optimize for the wrong job: the organization’s need for efficiency rather than the customer’s need to be understood. Before optimizing, ask: What job is this experience really being hired to do? When AI solves the wrong problem efficiently, it fails completely.

Keep humans in the loop

Human-in-the-loop (HITL) design is more than just a safeguard — it’s a partnership model. Make sure that employees can override, question, or improve AI outputs. The best systems combine human judgment with machine speed, using ongoing feedback to enhance both. Netflix and healthcare AI systems show that oversight doesn’t slow down innovation — it supports it.
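To make the partnership model concrete, here is a minimal Python sketch applied to the rental car scanner described earlier. Everything in it is hypothetical: the `DamageFinding` record, the `triage` and `final_charge` functions, the $100 materiality threshold, and the 0.8 confidence cutoff are illustrative assumptions, not any company’s actual system. The design choice it shows is that the model only triages. No charge reaches a customer without a human reviewer’s approval, and the reviewer can override the model’s estimate.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DamageFinding:
    """One anomaly flagged by a hypothetical AI damage scanner."""
    vehicle_id: str
    description: str
    estimated_cost: float  # model's repair estimate in USD
    confidence: float      # model confidence between 0 and 1


# Hypothetical policy values, chosen only for illustration.
MATERIALITY_THRESHOLD = 100.0  # below this, treat as normal wear and tear
LOW_CONFIDENCE = 0.8           # below this, flag the finding as uncertain for the reviewer


def triage(finding: DamageFinding, threshold: float = MATERIALITY_THRESHOLD) -> str:
    """Route a finding; the model never bills a customer on its own."""
    if finding.estimated_cost < threshold:
        return "no_charge"
    if finding.confidence < LOW_CONFIDENCE:
        return "human_review_uncertain"
    return "human_review"


def final_charge(
    finding: DamageFinding,
    reviewer_approved: bool,
    reviewer_amount: Optional[float] = None,
) -> float:
    """Return the amount billed after a human has reviewed the finding."""
    if triage(finding) == "no_charge" or not reviewer_approved:
        return 0.0
    # The reviewer can override the model's estimate, e.g. after hearing the customer.
    if reviewer_amount is not None:
        return reviewer_amount
    return finding.estimated_cost


scuff = DamageFinding("CAR-1", "small wheel scuff", estimated_cost=40.0, confidence=0.97)
dent = DamageFinding("CAR-2", "door dent", estimated_cost=600.0, confidence=0.70)

print(triage(scuff))  # no_charge
print(triage(dent))   # human_review_uncertain
print(final_charge(dent, reviewer_approved=True, reviewer_amount=350.0))  # 350.0
```

The materiality threshold is itself a business decision about which friction to keep; the sketch simply makes that decision explicit and reviewable rather than leaving it buried in the model.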

Design for trust and transparency

Transparency isn’t optional. Employees and customers must understand how AI decisions are made, when they can appeal, and who is responsible. Trust is the foundation of digital transformation; once lost, it’s expensive to rebuild. Ensure AI-driven optimization has the right sails to navigate away from the rocks; or, put differently, build the off-ramp to the human path and ensure your customers can access it easily.

Conclusion: Efficiency is a means, not a mission

Optimization will always be part of progress, but it must serve a purpose rather than replace one. When AI is pursued simply for its own sake, it often leads to frustration, failure, and a loss of trust. When it is guided by empathy, clarity, and systems thinking, however, AI becomes something much more powerful: a force that helps humans do their best work and make smarter decisions.